EXPLAINによるMySQLの実行計画の確認

担当しているソフトウェアで、運用しているとMySQL内のレコードが増えて性能が悪化するというお問い合わせをいただきました。 そこで、Indexを改善しようということになったのですが、どこにIndexを付けるべきか確認するためにMySQLの実行計画を確認しました。

自分のバックグラウンドとしては、データベースは特別詳しいわけではないので、 Indexは論理的に付けてはいるものの、それがあっているのかどうか確証はありませんでした。

で、調べたところMySQLでも実行計画が確認できるみたいなので、今回、それを使って確認を行ってみました。

MySQL :: MySQL 5.6 リファレンスマニュアル :: 8.8 クエリー実行プランの理解

対象のテーブル

こんなテーブルです。ユーザーのセッション情報を格納するためのテーブルで、ログアウトされないと、レコードが蓄積されていきます。

MySQL [apppot]> desc UserSession; +-----------------+--------------+------+-----+---------+-------+ | Field | Type | Null | Key | Default | Extra | +-----------------+--------------+------+-----+---------+-------+ | userToken | varchar(255) | NO | PRI | NULL | | | appTableId | bigint(20) | YES | | NULL | | | companyId | bigint(20) | YES | | NULL | | | deviceUDID | varchar(255) | YES | | NULL | | | loginDate | datetime | YES | | NULL | | | tokenExpireDate | datetime | YES | | NULL | | | userId | bigint(20) | YES | | NULL | | +-----------------+--------------+------+-----+---------+-------+ 7 rows in set (0.05 sec)

こんな感じのSQLが実行されます。

select * from UserSession where userId=205 and appTableId=4 and deviceUDID='apppotsdkjs' and tokenExpireDate>='2018-01-12 22:16:13';

MySQLの実行計画の確認は手軽で、EXPLAINを頭に付けるだけでOKです。

Index追加前

rowsに122381という値が入っていますが、いわゆるフルスキャンが発生して、全部のレコードをチェックしていることがわかりました。そりゃ遅いですね。

MySQL [apppot]> EXPLAIN select * from UserSession where userId=205 and appTableId=4 and deviceUDID='apppotsdkjs' and tokenExpireDate>='2018-01-12 22:16:13'; +----+-------------+-------------+------+---------------+------+---------+------+--------+-------------+ | id | select_type | table | type | possible_keys | key | key_len | ref | rows | Extra | +----+-------------+-------------+------+---------------+------+---------+------+--------+-------------+ | 1 | SIMPLE | UserSession | ALL | NULL | NULL | NULL | NULL | 122381 | Using where | +----+-------------+-------------+------+---------------+------+---------+------+--------+-------------+ 1 row in set (0.01 sec)

userIdにIndexを追加してみる

試しにuserIdにIndexを追加してみます。 ログインするユーザーが十分バラけていれば、これでも早くなりそうな感じがします。

ALTER TABLE UserSession ADD INDEX index_userId(userId);

実行計画はこんな感じ。チェックする対象のrowsの値が1/4になってますね。

MySQL [apppot]> EXPLAIN select * from UserSession where userId=205 and appTableId=4 and deviceUDID='apppotsdkjs' and tokenExpireDate>='2018-01-12 22:16:13'; +----+-------------+-------------+------+---------------+--------------+---------+-------+-------+-------------+ | id | select_type | table | type | possible_keys | key | key_len | ref | rows | Extra | +----+-------------+-------------+------+---------------+--------------+---------+-------+-------+-------------+ | 1 | SIMPLE | UserSession | ref | index_userId | index_userId | 9 | const | 33436 | Using where | +----+-------------+-------------+------+---------------+--------------+---------+-------+-------+-------------+ 1 row in set (0.00 sec)

複数カラムのIndex

MySQLは複数のIndexあっても、どれか1つしか使ってくれません。 そこで、複数のカラムで1つのIndexを作ることができます。遅いSQLの条件で使っているカラムの組み合わせてIndexを作ります。

ALTER TABLE UserSession ADD INDEX index_get_session(userId, appTableId, deviceUDID, tokenExpireDate);

実行計画を見てみましょう。rowsの値が3桁減ってますね。 possible_keysが使用可能なIndex、keyが実際に使われたIndexということのようです。 新しく付けたindex_get_sessionが使われてますね。

MySQL [apppot]> EXPLAIN select * from UserSession where userId=205 and appTableId=4 and deviceUDID='apppotsdkjs' and tokenExpireDate>='2018-01-12 22:16:13'; +----+-------------+-------------+-------+--------------------------------+-------------------+---------+------+------+-----------------------+ | id | select_type | table | type | possible_keys | key | key_len | ref | rows | Extra | +----+-------------+-------------+-------+--------------------------------+-------------------+---------+------+------+-----------------------+ | 1 | SIMPLE | UserSession | range | index_userId,index_get_session | index_get_session | 792 | NULL | 258 | Using index condition | +----+-------------+-------------+-------+--------------------------------+-------------------+---------+------+------+-----------------------+ 1 row in set (0.08 sec)

以上です。

macOSにTensorFlowをセットアップする (2018/04更新)

マニュアルInstalling TensorFlow on macOS | TensorFlowでオススメされているvirtualenvを使った、セットアップを行います。

このドキュメントは2018/04/29にMacBook Pro 2017年モデルに環境構築をするために、再確認して更新しています。

使用した環境

- macOS High Sierra

- Tensorflow 1.8

- pip

Pythonのパッケージ管理ツール - Virtualenv

Virtualenv — virtualenv 15.1.0 documentation

Virtualenvは、独立したPython実行環境を作ることができるツールです。Virtualenvを使うことで、他の環境から影響を受けたり、与えたりすることがないので、好き放題実験することができます。

事前準備

macOSにHomebrewのセットアップを行っています。

1. Python環境の構築

pyenvでPythonの複数環境を構築して、Python3をインストールしていきます。

1.1. pyenvをHomebrewでインストール

$ brew install pyenv

1.2. Python3をインストール

利用できるPythonのバージョンを確認

$ pyenv install -l Available versions: 2.1.3 2.2.3 (略) 3.6.2 3.6.3 3.6.4 3.6.5 3.7.0b2 3.7-dev 3.8-dev activepython-2.7.14 (略)

Python 3をインストール

$ pyenv install 3.6.5 python-build: use openssl from homebrew python-build: use readline from homebrew Downloading Python-3.6.5.tar.xz... -> https://www.python.org/ftp/python/3.6.5/Python-3.6.5.tar.xz Installing Python-3.6.5... python-build: use readline from homebrew Installed Python-3.6.5 to /Users/rsogo/.pyenv/versions/3.6.5

2. Virtualenvのインストール

pipを使ってVirtualenvをインストールします。

--upgradeは関連パッケージに新しいバージョンがある場合は、更新するというオプションです。

$ sudo pip install --upgrade virtualenv

The directory '/Users/rsogo/Library/Logs/pip' or its parent directory is not owned by the current user and the debug log has been disabled. Please check the permissions and owner of that directory. If executing pip with sudo, you may want the -H flag.

The directory '/Users/rsogo/Library/Caches/pip/http' or its parent directory is not owned by the current user and the cache has been disabled. Please check the permissions and owner of that directory. If executing pip with sudo, you may want the -H flag.

You are using pip version 6.0.6, however version 9.0.1 is available.

You should consider upgrading via the 'pip install --upgrade pip' command.

The directory '/Users/rsogo/Library/Caches/pip/http' or its parent directory is not owned by the current user and the cache has been disabled. Please check the permissions and owner of that directory. If executing pip with sudo, you may want the -H flag.

Collecting virtualenv

Downloading virtualenv-15.1.0-py2.py3-none-any.whl (1.8MB)

100% |################################| 1.8MB 1.9MB/s

Installing collected packages: virtualenv

Successfully installed virtualenv-15.1.0

3. Tensorflowの環境を作る

3.1 VirtualenvでTensorflowの環境を作る

-pオプションでセットアップしたpython3の環境を指定して、Virtualenv環境を作ります。

tensorflowというディレクトリをターゲットとしています。ディレクトリ名は任意です。

rsogo-Mac-Book-2:~ rsogo$ virtualenv --system-site-packages -p ~/.pyenv/versions/3.6.5/bin/python3.6 tensorflow Running virtualenv with interpreter /Users/rsogo/.pyenv/versions/3.6.5/bin/python3.6 Using base prefix '/Users/rsogo/.pyenv/versions/3.6.5' New python executable in /Users/rsogo/tensorflow/bin/python3.6 Also creating executable in /Users/rsogo/tensorflow/bin/python Installing setuptools, pip, wheel...done.

3.2. Virtualenv環境を有効にする

cd tensorflow/ $ source bin/activate (tensorflow) Ryohei-no-MacBook-Pro:tensorflow rsogo$

これで、作ったVirtualenv環境に入っています

3.3. Tensorflowのインストール

$ pip3 install --upgrade tensorflow

Collecting tensorflow

Downloading tensorflow-1.2.1-cp36-cp36m-macosx_10_11_x86_64.whl (34.1MB)

100% |████████████████████████████████| 34.1MB 37kB/s

(略)

Successfully built protobuf markdown html5lib

Installing collected packages: six, protobuf, numpy, markdown, backports.weakref, html5lib, bleach, werkzeug, tensorflow

Successfully installed backports.weakref-1.0rc1 bleach-1.5.0 html5lib-0.9999999 markdown-2.6.8 numpy-1.13.1 protobuf-3.3.0 six-1.10.0 tensorflow-1.2.1 werkzeug-0.12.2

4. 動作確認

4.1. チュートリアルをやってみる

下記のソースをtutorial1.pyという名前で作成します。

import tensorflow as tf

node1 = tf.constant(3.0, dtype=tf.float32)

node2 = tf.constant(4.0) # also tf.float32 implicitly

print(node1, node2)

sess = tf.Session()

print(sess.run([node1, node2]))

node3 = tf.add(node1, node2)

print("node3: ", node3)

print("sess.run(node3): ", sess.run(node3))

4.2. 実行してみます

$ python tutorial1.py

Tensor("Const:0", shape=(), dtype=float32) Tensor("Const_1:0", shape=(), dtype=float32)

2017-08-06 00:32:11.351356: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.

2017-08-06 00:32:11.351380: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.

2017-08-06 00:32:11.351385: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.

2017-08-06 00:32:11.351390: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.

[3.0, 4.0]

node3: Tensor("Add:0", shape=(), dtype=float32)

sess.run(node3): 7.0

動いてそうですね。

MySQL(RDS)へのデータのインポート

RDSへ、Excelでもらったマスタデータを取り込む時にいろいろとハマったので書いておこうと思います。

RDSでmysqlimportは使えない、LOAD DATA LOCAL INFILEを使う

まさにこちらの記事と同じ手順を辿りました。

RDSでcsvファイルをインポートしようとして権限エラーになったときの対応 - Qiita

インポートしたデータが文字化けする

mysql> show variables like '%char%'; +--------------------------+-------------------------------------------+ | Variable_name | Value | +--------------------------+-------------------------------------------+ | character_set_client | utf8 | | character_set_connection | utf8 | | character_set_database | latin1 | | character_set_filesystem | binary | | character_set_results | utf8 | | character_set_server | latin1 | | character_set_system | utf8 | | character_sets_dir | /rdsdbbin/mysql-5.6.34.R1/share/charsets/ | +--------------------------+-------------------------------------------+ 8 rows in set (0.00 sec)

LOAD DATA INFILEはcharacter_set_databaseを使っているようです。

上記の場合、latin1になっていることが原因でした。utf8に変える必要がありました。

set character_set_databaseで、変更ができます。

mysql> set character_set_database = utf8; Query OK, 0 rows affected (0.00 sec) mysql> show variables like '%char%'; +--------------------------+-------------------------------------------+ | Variable_name | Value | +--------------------------+-------------------------------------------+ | character_set_client | utf8 | | character_set_connection | utf8 | | character_set_database | utf8 | | character_set_filesystem | binary | | character_set_results | utf8 | | character_set_server | latin1 | | character_set_system | utf8 | | character_sets_dir | /rdsdbbin/mysql-5.6.34.R1/share/charsets/ | +--------------------------+-------------------------------------------+ 8 rows in set (0.00 sec)

参考にさせていただきました

MySQLのload data infile文で文字化けする場合の対策 | クレストボウズ::技術者ブログ

CSVデータのインポート

LOAD DATA LOCAL INFILE '/tmp/data.csv' INTO TABLE CP FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"' LINES TERMINATED BY '\r\n';

Raspberry PiにnodeとReactを入れる

冬休みの勉強でRaspberry Piにnode入れて、なんかいろいろやろうと思います。

cpuinfoでCPUの確認

pi@raspberrypi:~$ cat /proc/cpuinfo processor : 0 model name : ARMv7 Processor rev 4 (v7l) BogoMIPS : 38.40 Features : half thumb fastmult vfp edsp neon vfpv3 tls vfpv4 idiva idivt vfpd32 lpae evtstrm crc32 CPU implementer : 0x41 CPU architecture: 7 CPU variant : 0x0 CPU part : 0xd03 CPU revision : 4 (Coreは4つありますが、同じなので省略) Hardware : BCM2709 Revision : a02082 Serial : 00000000dad0288f

ARMv7ですね。

nodeのインストール

インストールはLinuxの場合はCPUに合わせてバイナリをダウンロードして、解凍するだけでした。今回はさっき調べてARMv7と分かっているので、対応したファイルを使います。

pi@raspberrypi:~$ wget https://nodejs.org/dist/v6.9.2/node-v6.9.2-linux-armv7l.tar.xz pi@raspberrypi:~$ tar xvf node-v6.9.2-linux-armv7l.tar.xz pi@raspberrypi:~$ sudo su - root@raspberrypi:~# mkdir /opt/node/ root@raspberrypi:~# cp -r /home/pi/Downloads/node-v6.9.2-linux-armv7l/* /opt/node/

バージョンの確認をします。

pi@raspberrypi:~/works$ node -v v6.9.2 pi@raspberrypi:~/works$ npm -v 3.10.9

Reactを入れてみます

まぁここから先はnpmがよろしくやってくれるので、単なる記録です。

pi@raspberrypi:~/works$ sudo /opt/node/bin/npm install -g create-react-app /usr/bin/create-react-app -> /usr/lib/node_modules/create-react-app/index.js /usr/lib └── create-react-app@1.0.2

Create React Appでプロジェクトを作れます

pi@raspberrypi:~/works$ create-react-app hellow-world

Creating a new React app in /home/pi/works/hellow-world.

Installing packages. This might take a couple minutes.

Installing react-scripts...

npm WARN prefer global marked@0.3.6 should be installed with -g

hellow-world@0.1.0 /home/pi/works/hellow-world

└─┬ react-scripts@0.8.4

├─┬ autoprefixer@6.5.1

(中略)

Success! Created hellow-world at /home/pi/works/hellow-world

Inside that directory, you can run several commands:

npm start

Starts the development server.

npm run build

Bundles the app into static files for production.

npm test

Starts the test runner.

npm run eject

Removes this tool and copies build dependencies, configuration files

and scripts into the app directory. If you do this, you can’t go back!

We suggest that you begin by typing:

cd hellow-world

npm start

プロジェクトのビルド

pi@raspberrypi:~/works/hellow-world$ npm run build > hellow-world@0.1.0 build /home/pi/works/hellow-world > react-scripts build Creating an optimized production build... Compiled successfully. File sizes after gzip: 45.92 KB build/static/js/main.4602eddc.js 289 B build/static/css/main.9a0fe4f1.css The project was built assuming it is hosted at the server root. To override this, specify the homepage in your package.json. For example, add this to build it for GitHub Pages: "homepage": "http://myname.github.io/myapp", The build folder is ready to be deployed. You may also serve it locally with a static server: npm install -g pushstate-server pushstate-server build open http://localhost:9000

プロジェクトの実行

pi@raspberrypi:~/works/hellow-world$ npm start Compiled successfully! The app is running at: http://localhost:3000/ Note that the development build is not optimized. To create a production build, use npm run build.

Raspberry Biのアドレスと3000番ポートにアクセスするとサンプルが実行されているのが確認できました。

Webサーバーを入れる

今後のことも考えて、単独で実行できるhttp-serverも入れておきます。

root@raspberrypi:~# npm install -g http-server

npm WARN npm npm does not support Node.js v0.10.29

npm WARN npm You should probably upgrade to a newer version of node as we

npm WARN npm can't make any promises that npm will work with this version.

npm WARN npm You can find the latest version at https://nodejs.org/

/usr/bin/http-server -> /usr/lib/node_modules/http-server/bin/http-server

/usr/bin/hs -> /usr/lib/node_modules/http-server/bin/http-server

/usr/lib

└─┬ http-server@0.9.0

├── colors@1.0.3

├── corser@2.0.1

├─┬ ecstatic@1.4.1

│ ├── he@0.5.0

│ ├── mime@1.3.4

│ ├── minimist@1.2.0

│ └── url-join@1.1.0

├─┬ http-proxy@1.16.2

│ ├── eventemitter3@1.2.0

│ └── requires-port@1.0.0

├── opener@1.4.2

├─┬ optimist@0.6.1

│ ├── minimist@0.0.10

│ └── wordwrap@0.0.3

├─┬ portfinder@0.4.0

│ ├── async@0.9.0

│ └─┬ mkdirp@0.5.1

│ └── minimist@0.0.8

└─┬ union@0.4.6

└── qs@2.3.3

Webサーバーの起動

ドキュメントルートにしたい場所まで移動して、起動します。

pi@raspberrypi:~$ cd works/hellow-world/ pi@raspberrypi:~/works/hellow-world$ http-server Starting up http-server, serving ./public Available on: http://127.0.0.1:8080 http://192.168.10.11:8080 Hit CTRL-C to stop the server

これで他の端末からhttp://192.168.10.11:8080/でRaspberry Pi上のWebサーバーにアクセスできます。

MacOSXへのApache Kafkaのセットアップ

0.10.0.1を入れます。

1. Zookeeperの起動

$ bin/zookeeper-server-start.sh config/zookeeper.properties Picked up JAVA_TOOL_OPTIONS: -Dfile.encoding=UTF-8 [2016-10-09 22:17:15,900] INFO Reading configuration from: config/zookeeper.properties (org.apache.zookeeper.server.quorum.QuorumPeerConfig) [2016-10-09 22:17:15,903] INFO autopurge.snapRetainCount set to 3 (org.apache.zookeeper.server.DatadirCleanupManager) [2016-10-09 22:17:15,905] INFO autopurge.purgeInterval set to 0 (org.apache.zookeeper.server.DatadirCleanupManager) [2016-10-09 22:17:15,906] INFO Purge task is not scheduled. (org.apache.zookeeper.server.DatadirCleanupManager) [2016-10-09 22:17:15,906] WARN Either no config or no quorum defined in config, running in standalone mode (org.apache.zookeeper.server.quorum.QuorumPeerMain) [2016-10-09 22:17:15,930] INFO Reading configuration from: config/zookeeper.properties (org.apache.zookeeper.server.quorum.QuorumPeerConfig) [2016-10-09 22:17:15,930] INFO Starting server (org.apache.zookeeper.server.ZooKeeperServerMain) (中略) [2016-10-09 22:17:15,991] INFO tickTime set to 3000 (org.apache.zookeeper.server.ZooKeeperServer) [2016-10-09 22:17:15,991] INFO minSessionTimeout set to -1 (org.apache.zookeeper.server.ZooKeeperServer) [2016-10-09 22:17:15,991] INFO maxSessionTimeout set to -1 (org.apache.zookeeper.server.ZooKeeperServer) [2016-10-09 22:17:16,015] INFO binding to port 0.0.0.0/0.0.0.0:2181 (org.apache.zookeeper.server.NIOServerCnxnFactory)

2. Kafkaの起動

$ bin/kafka-server-start.sh config/server.properties

Picked up JAVA_TOOL_OPTIONS: -Dfile.encoding=UTF-8

[2016-10-09 22:18:41,676] INFO KafkaConfig values:

advertised.host.name = null

metric.reporters = []

quota.producer.default = 9223372036854775807

offsets.topic.num.partitions = 50

log.flush.interval.messages = 9223372036854775807

auto.create.topics.enable = true

controller.socket.timeout.ms = 30000

log.flush.interval.ms = null

principal.builder.class = class org.apache.kafka.common.security.auth.DefaultPrincipalBuilder

replica.socket.receive.buffer.bytes = 65536

min.insync.replicas = 1

replica.fetch.wait.max.ms = 500

num.recovery.threads.per.data.dir = 1

ssl.keystore.type = JKS

sasl.mechanism.inter.broker.protocol = GSSAPI

default.replication.factor = 1

ssl.truststore.password = null

log.preallocate = false

sasl.kerberos.principal.to.local.rules = [DEFAULT]

fetch.purgatory.purge.interval.requests = 1000

ssl.endpoint.identification.algorithm = null

replica.socket.timeout.ms = 30000

message.max.bytes = 1000012

num.io.threads = 8

offsets.commit.required.acks = -1

log.flush.offset.checkpoint.interval.ms = 60000

delete.topic.enable = false

quota.window.size.seconds = 1

ssl.truststore.type = JKS

offsets.commit.timeout.ms = 5000

quota.window.num = 11

zookeeper.connect = localhost:2181

authorizer.class.name =

num.replica.fetchers = 1

log.retention.ms = null

log.roll.jitter.hours = 0

log.cleaner.enable = true

offsets.load.buffer.size = 5242880

log.cleaner.delete.retention.ms = 86400000

ssl.client.auth = none

controlled.shutdown.max.retries = 3

queued.max.requests = 500

offsets.topic.replication.factor = 3

log.cleaner.threads = 1

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

socket.request.max.bytes = 104857600

ssl.trustmanager.algorithm = PKIX

zookeeper.session.timeout.ms = 6000

log.retention.bytes = -1

log.message.timestamp.type = CreateTime

sasl.kerberos.min.time.before.relogin = 60000

zookeeper.set.acl = false

connections.max.idle.ms = 600000

offsets.retention.minutes = 1440

replica.fetch.backoff.ms = 1000

inter.broker.protocol.version = 0.10.0-IV1

log.retention.hours = 168

num.partitions = 1

broker.id.generation.enable = true

listeners = null

ssl.provider = null

ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1]

log.roll.ms = null

log.flush.scheduler.interval.ms = 9223372036854775807

ssl.cipher.suites = null

log.index.size.max.bytes = 10485760

ssl.keymanager.algorithm = SunX509

security.inter.broker.protocol = PLAINTEXT

replica.fetch.max.bytes = 1048576

advertised.port = null

log.cleaner.dedupe.buffer.size = 134217728

replica.high.watermark.checkpoint.interval.ms = 5000

log.cleaner.io.buffer.size = 524288

sasl.kerberos.ticket.renew.window.factor = 0.8

zookeeper.connection.timeout.ms = 6000

controlled.shutdown.retry.backoff.ms = 5000

log.roll.hours = 168

log.cleanup.policy = delete

host.name =

log.roll.jitter.ms = null

max.connections.per.ip = 2147483647

offsets.topic.segment.bytes = 104857600

background.threads = 10

quota.consumer.default = 9223372036854775807

request.timeout.ms = 30000

log.message.format.version = 0.10.0-IV1

log.index.interval.bytes = 4096

log.dir = /tmp/kafka-logs

log.segment.bytes = 1073741824

log.cleaner.backoff.ms = 15000

offset.metadata.max.bytes = 4096

ssl.truststore.location = null

group.max.session.timeout.ms = 300000

ssl.keystore.password = null

zookeeper.sync.time.ms = 2000

port = 9092

log.retention.minutes = null

log.segment.delete.delay.ms = 60000

log.dirs = /tmp/kafka-logs

controlled.shutdown.enable = true

compression.type = producer

max.connections.per.ip.overrides =

log.message.timestamp.difference.max.ms = 9223372036854775807

sasl.kerberos.kinit.cmd = /usr/bin/kinit

log.cleaner.io.max.bytes.per.second = 1.7976931348623157E308

auto.leader.rebalance.enable = true

leader.imbalance.check.interval.seconds = 300

log.cleaner.min.cleanable.ratio = 0.5

replica.lag.time.max.ms = 10000

num.network.threads = 3

ssl.key.password = null

reserved.broker.max.id = 1000

metrics.num.samples = 2

socket.send.buffer.bytes = 102400

ssl.protocol = TLS

socket.receive.buffer.bytes = 102400

ssl.keystore.location = null

replica.fetch.min.bytes = 1

broker.rack = null

unclean.leader.election.enable = true

sasl.enabled.mechanisms = [GSSAPI]

group.min.session.timeout.ms = 6000

log.cleaner.io.buffer.load.factor = 0.9

offsets.retention.check.interval.ms = 600000

producer.purgatory.purge.interval.requests = 1000

metrics.sample.window.ms = 30000

broker.id = 0

offsets.topic.compression.codec = 0

log.retention.check.interval.ms = 300000

advertised.listeners = null

leader.imbalance.per.broker.percentage = 10

(kafka.server.KafkaConfig)

[2016-10-09 22:18:41,741] INFO starting (kafka.server.KafkaServer)

[2016-10-09 22:18:41,748] INFO Connecting to zookeeper on localhost:2181 (kafka.server.KafkaServer)

[2016-10-09 22:18:41,781] INFO Starting ZkClient event thread. (org.I0Itec.zkclient.ZkEventThread)

(略)

[2016-10-09 22:18:41,804] INFO Initiating client connection, connectString=localhost:2181 sessionTimeout=6000 watcher=org.I0Itec.zkclient.ZkClient@3e2e18f2 (org.apache.zookeeper.ZooKeeper)

[2016-10-09 22:18:41,821] INFO Waiting for keeper state SyncConnected (org.I0Itec.zkclient.ZkClient)

[2016-10-09 22:18:41,825] INFO Opening socket connection to server localhost/127.0.0.1:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)

[2016-10-09 22:18:41,897] INFO Socket connection established to localhost/127.0.0.1:2181, initiating session (org.apache.zookeeper.ClientCnxn)

[2016-10-09 22:18:42,091] INFO Session establishment complete on server localhost/127.0.0.1:2181, sessionid = 0x157a9971f8c0000, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)

[2016-10-09 22:18:42,093] INFO zookeeper state changed (SyncConnected) (org.I0Itec.zkclient.ZkClient)

[2016-10-09 22:18:42,198] INFO Log directory '/tmp/kafka-logs' not found, creating it. (kafka.log.LogManager)

[2016-10-09 22:18:42,224] INFO Loading logs. (kafka.log.LogManager)

[2016-10-09 22:18:42,233] INFO Logs loading complete. (kafka.log.LogManager)

[2016-10-09 22:18:42,436] INFO Starting log cleanup with a period of 300000 ms. (kafka.log.LogManager)

[2016-10-09 22:18:42,441] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)

[2016-10-09 22:18:42,457] WARN No meta.properties file under dir /tmp/kafka-logs/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[2016-10-09 22:18:42,509] INFO Awaiting socket connections on 0.0.0.0:9092. (kafka.network.Acceptor)

[2016-10-09 22:18:42,512] INFO [Socket Server on Broker 0], Started 1 acceptor threads (kafka.network.SocketServer)

[2016-10-09 22:18:42,552] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2016-10-09 22:18:42,553] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2016-10-09 22:18:42,638] INFO Creating /controller (is it secure? false) (kafka.utils.ZKCheckedEphemeral)

[2016-10-09 22:18:42,648] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)

[2016-10-09 22:18:42,649] INFO 0 successfully elected as leader (kafka.server.ZookeeperLeaderElector)

[2016-10-09 22:18:42,737] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2016-10-09 22:18:42,739] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[2016-10-09 22:18:42,793] INFO [Group Metadata Manager on Broker 0]: Removed 0 expired offsets in 17 milliseconds. (kafka.coordinator.GroupMetadataManager)

[2016-10-09 22:18:42,801] INFO [GroupCoordinator 0]: Starting up. (kafka.coordinator.GroupCoordinator)

[2016-10-09 22:18:42,802] INFO [GroupCoordinator 0]: Startup complete. (kafka.coordinator.GroupCoordinator)

[2016-10-09 22:18:42,880] INFO New leader is 0 (kafka.server.ZookeeperLeaderElector$LeaderChangeListener)

[2016-10-09 22:18:42,889] INFO [ThrottledRequestReaper-Produce], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)

[2016-10-09 22:18:42,890] INFO [ThrottledRequestReaper-Fetch], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)

[2016-10-09 22:18:42,901] INFO Will not load MX4J, mx4j-tools.jar is not in the classpath (kafka.utils.Mx4jLoader$)

[2016-10-09 22:18:42,945] INFO Creating /brokers/ids/0 (is it secure? false) (kafka.utils.ZKCheckedEphemeral)

[2016-10-09 22:18:42,949] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)

[2016-10-09 22:18:42,955] INFO Registered broker 0 at path /brokers/ids/0 with addresses: PLAINTEXT -> EndPoint(192.168.11.5,9092,PLAINTEXT) (kafka.utils.ZkUtils)

[2016-10-09 22:18:42,956] WARN No meta.properties file under dir /tmp/kafka-logs/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[2016-10-09 22:18:43,046] INFO Kafka version : 0.10.0.1 (org.apache.kafka.common.utils.AppInfoParser)

[2016-10-09 22:18:43,046] INFO Kafka commitId : a7a17cdec9eaa6c5 (org.apache.kafka.common.utils.AppInfoParser)

[2016-10-09 22:18:43,047] INFO [Kafka Server 0], started (kafka.server.KafkaServer)

[2016-10-09 22:19:43,240] INFO [ReplicaFetcherManager on broker 0] Removed fetcher for partitions [page_visit,0] (kafka.server.ReplicaFetcherManager)

[2016-10-09 22:19:43,295] INFO Completed load of log page_visit-0 with log end offset 0 (kafka.log.Log)

[2016-10-09 22:19:43,298] INFO Created log for partition [page_visit,0] in /tmp/kafka-logs with properties {compression.type -> producer, message.format.version -> 0.10.0-IV1, file.delete.delay.ms -> 60000, max.message.bytes -> 1000012, message.timestamp.type -> CreateTime, min.insync.replicas -> 1, segment.jitter.ms -> 0, preallocate -> false, min.cleanable.dirty.ratio -> 0.5, index.interval.bytes -> 4096, unclean.leader.election.enable -> true, retention.bytes -> -1, delete.retention.ms -> 86400000, cleanup.policy -> delete, flush.ms -> 9223372036854775807, segment.ms -> 604800000, segment.bytes -> 1073741824, retention.ms -> 604800000, message.timestamp.difference.max.ms -> 9223372036854775807, segment.index.bytes -> 10485760, flush.messages -> 9223372036854775807}. (kafka.log.LogManager)

[2016-10-09 22:19:43,299] INFO Partition [page_visit,0] on broker 0: No checkpointed highwatermark is found for partition [page_visit,0] (kafka.cluster.Partition)

[2016-10-09 22:28:42,798] INFO [Group Metadata Manager on Broker 0]: Removed 0 expired offsets in 0 milliseconds. (kafka.coordinator.GroupMetadataManager)

3. Topicの作成

$ bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic page_visit

Picked up JAVA_TOOL_OPTIONS: -Dfile.encoding=UTF-8

WARNING: Due to limitations in metric names, topics with a period ('.') or underscore ('_') could collide. To avoid issues it is best to use either, but not both.

Created topic "page_visit".

MacOSXへのActiveMQのセットアップ

MQTTを試したいので、ActiveMQをローカルに入れたいと思います。

1. モジュールの入手

http://activemq.apache.org/ 今は5.14.1 が最新みたいです。

Windows Distributionと、Unix/Linux/Cygwin Distributionがあるので、Unix/Linux/Cygwin Distributionの方をダウンロードして、解凍します。

2. 起動

解凍した場所をACTIVEMQ_HOMEとします。$ACTIVEMQ_HOME/bin配下のactivemq startで起動できます。

$ bin/activemq start INFO: Loading '/Users/rsogo/work/apppot-iot/apache-activemq-5.14.1//bin/env' INFO: Using java '/Library/Java/JavaVirtualMachines/jdk1.8.0_45.jdk/Contents/Home/bin/java' INFO: Starting - inspect logfiles specified in logging.properties and log4j.properties to get details INFO: pidfile created : '/Users/rsogo/work/apppot-iot/apache-activemq-5.14.1//data/activemq.pid' (pid '48834')

ログは$ACTIVEMQ_HOME/data/activemq.logにでているっぽい。

$ tail -f data/activemq.log 2016-10-09 21:35:59,708 | INFO | Apache ActiveMQ 5.14.1 (localhost, ID:******) started | org.apache.activemq.broker.BrokerService | main 2016-10-09 21:35:59,710 | INFO | For help or more information please see: http://activemq.apache.org | org.apache.activemq.broker.BrokerService | main 2016-10-09 21:35:59,723 | WARN | Store limit is 102400 mb (current store usage is 0 mb). The data directory: /Users/rsogo/work/apppot-iot/apache-activemq-5.14.1/data/kahadb only has 24747 mb of usable space. - resetting to maximum available disk space: 24747 mb | org.apache.activemq.broker.BrokerService | main 2016-10-09 21:35:59,726 | WARN | Temporary Store limit is 51200 mb (current store usage is 0 mb). The data directory: /Users/rsogo/work/apppot-iot/apache-activemq-5.14.1/data only has 24747 mb of usable space. - resetting to maximum available disk space: 24747 mb | org.apache.activemq.broker.BrokerService | main 2016-10-09 21:36:00,382 | INFO | No Spring WebApplicationInitializer types detected on classpath | /admin | main 2016-10-09 21:36:00,546 | INFO | ActiveMQ WebConsole available at http://0.0.0.0:8161/ | org.apache.activemq.web.WebConsoleStarter | main 2016-10-09 21:36:00,546 | INFO | ActiveMQ Jolokia REST API available at http://0.0.0.0:8161/api/jolokia/ | org.apache.activemq.web.WebConsoleStarter | main 2016-10-09 21:36:00,615 | INFO | Initializing Spring FrameworkServlet 'dispatcher' | /admin | main 2016-10-09 21:36:00,891 | INFO | No Spring WebApplicationInitializer types detected on classpath | /api | main 2016-10-09 21:36:00,973 | INFO | jolokia-agent: Using policy access restrictor classpath:/jolokia-access.xml | /api | main

3. 管理画面の確認

ログにでている通り、8161ポートでWebConsoleが動いています。

4. キューの作成

5. テストメッセージの送信

Destinationに作ったキューを指定して、Send。

Queuesメニューから先程作ったキューを選択すると、メッセージが入っていることを確認できます。

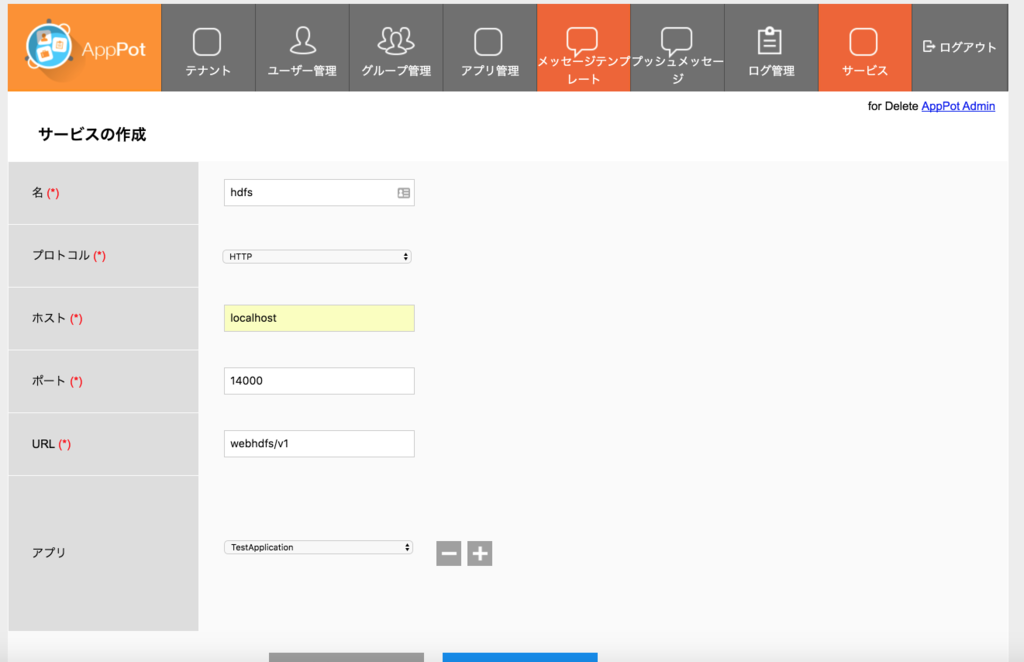

AppPot経由でHttpFSでREST化したHadoopとやり取りする

GetAnonymouseToken

- GETメソッド

http://localhost:8080/apppot/api/1/apppot.TestApplication/1.0.0/anonymousTokens?appKey=40c71254aca44664b61635573085ef1d&deviceUDID=bd393116-0b47-4b9e-a186-e64ffc0fbdf2

- Response

{

"status": "OK",

"errCode": 0,

"description": null,

"results": "e3b82dd1cd964b76a2ee62c0ec97344f"

}

Login

- POSTメソッド

http://localhost:8080/apppot/api/1/apppot.TestApplication/1.0.0/auth/login

- Request

{

"username": "yamada",

"password": "12345678@X",

"appId": "apppot.TestApplication",

"appVersion": "1.0.0",

"deviceUDID": "bd393116-0b47-4b9e-a186-e64ffc0fbdf2",

"isPush": "false",

"companyId": 1

}

- Response

{

"status": "OK",

"errCode": 0,

"description": null,

"apppotInfo": "AppPot Server 2.3.6 ",

"authInfor": {

"userTokens": "e0f08ddd14d3496d978afdb3530fe64f",

"validTime": 1474388126575,

"userId": 2,

"userInfo": {

"account": "yamada",

"firstName": "太郎",

"lastName": "山田"

},

"groupsAndRoles": [

{

"groupId": 1,

"groupName": "開発グループ",

"description": "",

"roleId": 2,

"roleName": "Super Admin"

}

]

}

}

ファイルの状態確認

- GETメソッド

http://localhost:8080/apppot/api/1/apppot.TestApplication/1.0.0/gateway/hdfs/hoge?user.name=rsogo&op=liststatus

HttpFSの検証で作ったファイルをAppPot経由で参照することができました。

- Response

{

"status": "OK",

"errCode": 0,

"description": "",

"results": {

"hoge": {

"FileStatuses": {

"FileStatus": [

{

"pathSuffix": "hoge",

"type": "FILE",

"length": 19,

"owner": "rsogo",

"group": "supergroup",

"permission": "755",

"accessTime": 1474384290147,

"modificationTime": 1474384291294,

"blockSize": 134217728,

"replication": 1

}

]

}

}

}

}

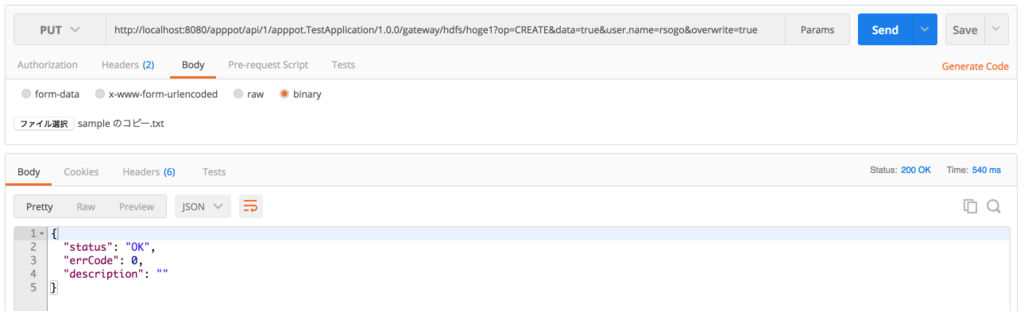

ファイルの作成

- apppot-token: Loginの結果得られたトークン

- Content-Type: application/octet-stream

http://localhost:8080/apppot/api/1/apppot.TestApplication/1.0.0/gateway/hdfs/hoge1?op=CREATE&data=true&user.name=rsogo&overwrite=true

POSTMANを使っていますが、ファイルの添付ができるので、Hadoopに投入したいファイルを選択します。

ファイルの読み込み

http://localhost:8080/apppot/api/1/apppot.TestApplication/1.0.0/gateway/hdfs/hoge1?op=open&user.name=rsogo

{

"status": "OK",

"errCode": 0,

"description": "",

"results": {

"hoge1": "aaa,bbb"

}

}

OK