Working through the Flutter tutorial, with notes

Day 1

I got as far as this:

codelabs.developers.google.com

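When I came back to Flutter after a long break, the build failed, but upgrading Flutter to the latest version fixed it.

I was wondering what exactly MaterialApp is, so I looked it up in the documentation. It appears to be a Widget that groups multiple Widgets together and applies Material Design to them. Whether to have one per application or one per screen is something I'll decide after working with it a bit more.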

MaterialApp class - material library - Dart API

Day 2

The goal is to understand how state works.

I'll work through this: docs.flutter.dev

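Controlling Minecraft Pi Edition from Python

Recently I have been reading Raspberry Pi Cookbook, 3rd Edition. Following "Recipe 7.20: Using Python with Minecraft Pi Edition", I tried placing blocks and sending chat messages in Minecraft Pi Edition from Python.

My impression is that elementary school kids who already use Scratch and Minecraft would enjoy this as a next step.

Sending a chat message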

from mcpi import minecraft, block

mc = minecraft.Minecraft.create()

mc.postToChat("Hello,")

Minecraft Pi Edition must already be running before the script is executed.

Placing blocks

from mcpi import minecraft, block

mc = minecraft.Minecraft.create()

x, y, z = mc.player.getPos()

# Place 49 gold ore blocks in a diagonal line rising along +x from the player
for xy in range(1, 50):
    mc.setBlock(x + xy, y + xy, z, block.GOLD_ORE)
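To make it easier to see which positions the loop above touches, here is a minimal sketch that separates the coordinate calculation from the Minecraft connection. The helper names `staircase_positions` and `build_staircase` are made up for illustration and are not part of mcpi; `mc` is assumed to be the object returned by `minecraft.Minecraft.create()` as in the code above.

```python
def staircase_positions(x, y, z, steps):
    """Coordinates of a diagonal line rising along +x, starting next to (x, y, z)."""
    return [(x + i, y + i, z) for i in range(1, steps + 1)]


def build_staircase(mc, block_id, steps=49):
    """Place one block per step, starting next to the player's position."""
    x, y, z = mc.player.getPos()
    for pos in staircase_positions(x, y, z, steps):
        mc.setBlock(*pos, block_id)


# The first three positions when starting from the origin
print(staircase_positions(0, 0, 0, 3))
```

With Minecraft Pi Edition running, calling `build_staircase(mc, block.GOLD_ORE)` should behave like the original loop.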

The block constants are defined here: mcpi/block.py at master · martinohanlon/mcpi · GitHub

The API tutorial below has many more samples, so I'd like to try various things. www.stuffaboutcode.com

Sites I referred to

The API tutorial that the Raspberry Pi Cookbook also references. www.stuffaboutcode.com

A Qiita article I referred to. qiita.com

Raspberry Pi Cookbook: each topic is short, so there is only a little on Minecraft Pi, but personally I like the book a lot because it covers such a broad range.

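A sample of parallel processing with ExecutorService

To speed up the application I'm currently working on, I introduced parallel processing. The application builds a report and pastes in more than 100 images fetched through an API; the image-fetching part is what I parallelized.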
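As a rough illustration of the idea (not the actual sample, which uses Java's ExecutorService), here is a sketch using Python's `concurrent.futures.ThreadPoolExecutor` as a stand-in. `fetch_image` is a hypothetical placeholder for the real API call, and the worker count of 8 is an arbitrary assumption.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_image(image_id):
    """Hypothetical stand-in for the API call that downloads one image."""
    time.sleep(0.01)  # simulate network latency
    return f"image-{image_id}".encode()


def fetch_all(image_ids, max_workers=8):
    """Fetch images on a thread pool; results are returned in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch_image, image_ids))


if __name__ == "__main__":
    images = fetch_all(range(100))
    print(len(images))
```

Because `pool.map` keeps the input order, the images can be pasted into the report in sequence once the fetches have finished.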

I made a sample and uploaded it to GitHub. github.com

I'd like to write up an explanation over the winter break.

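Raspberry Pi setup notes

I'm going to install a Minecraft server on a Raspberry Pi that I had been using for various experiments and see whether multiplayer works.

I'll set it up from the command line (CUI).

Keyboard settings

I connected a USB keyboard with a Japanese layout.

The default is an English layout, where characters such as | cannot be typed, so it needs to be changed.

Run sudo raspi-config to open the configuration console, then change the layout under international setting and Keyboard setting. My keyboard model wasn't listed, so I picked an arbitrary Microsoft keyboard and selected the Japanese kana layout.

The change took effect after a reboot.

WiFi settings

- Check the access point: confirm that the target access point shows up in a scan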

sudo iwlist wlan0 scan | grep ESSID

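- Configure the connection to the access point

sudo ifdown wlan0 # stop the network interface first

sudo iwconfig wlan0 essid {SSID} key s:{passphrase}

sudo ifup wlan0 # bring the network interface back up

If the following command shows the SSID of the access point you connected to, it worked.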

sudo iwconfig wlan0

sudo ifdown wlan0

sudo ifup wlan0

ifconfig
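The scan step above can also be done from a script. Below is a minimal sketch that pulls the ESSID names out of `iwlist` scan output; the `SAMPLE` text is made up for illustration (addresses and network names are not real), and `parse_essids` is a hypothetical helper name.

```python
import re


def parse_essids(scan_output):
    """Return the ESSID names found in `iwlist <interface> scan` output."""
    return re.findall(r'ESSID:"([^"]*)"', scan_output)


# Illustrative sample only; real output contains many more fields per cell.
SAMPLE = '''
          Cell 01 - Address: 00:11:22:33:44:55
                    ESSID:"home-ap"
          Cell 02 - Address: 66:77:88:99:AA:BB
                    ESSID:"guest-ap"
'''

print(parse_essids(SAMPLE))
```

On the Raspberry Pi itself, the text could come from `subprocess.run(["sudo", "iwlist", "wlan0", "scan"], capture_output=True, text=True).stdout` instead of the sample string.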

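Sending email with AWS SNS

Ultimately I want to find out how to send push notifications to a smartphone with AWS SNS, but first I'd like to go through the simpler procedure of sending an email.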

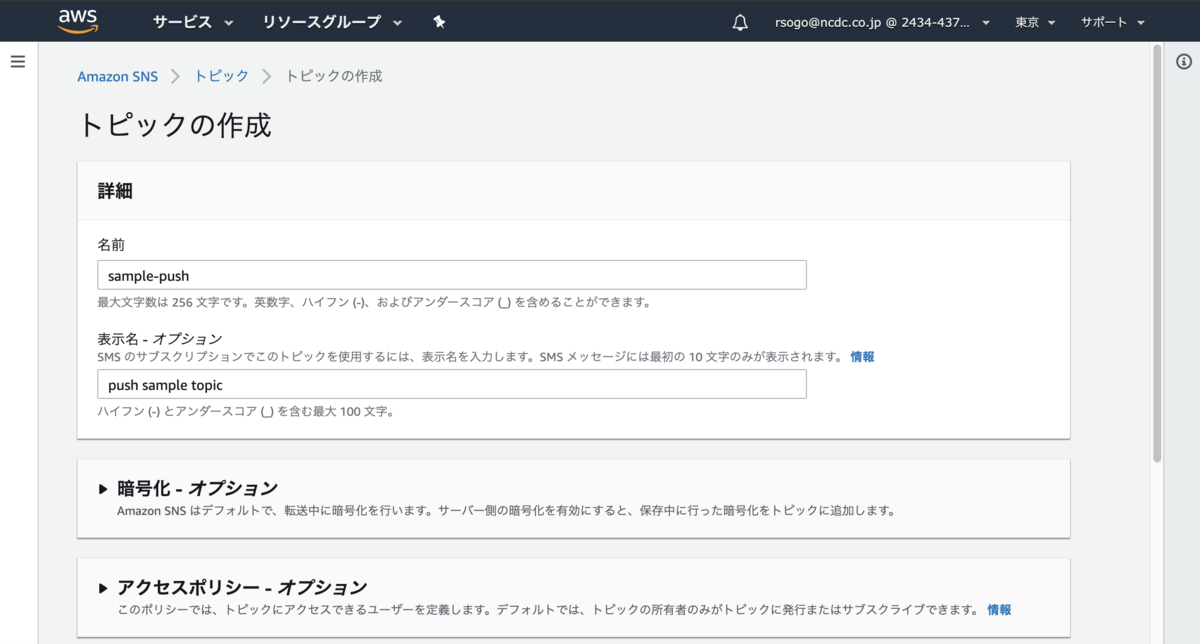

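Creating a topic

The following settings are available:

- Encryption

- Access policy

- Delivery retry policy

- Delivery status logging

Creating a subscription

This time, as a test, I'll choose Email as the protocol and specify my own email address as the endpoint.

The following can be set as an option:

- Subscription filter policy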

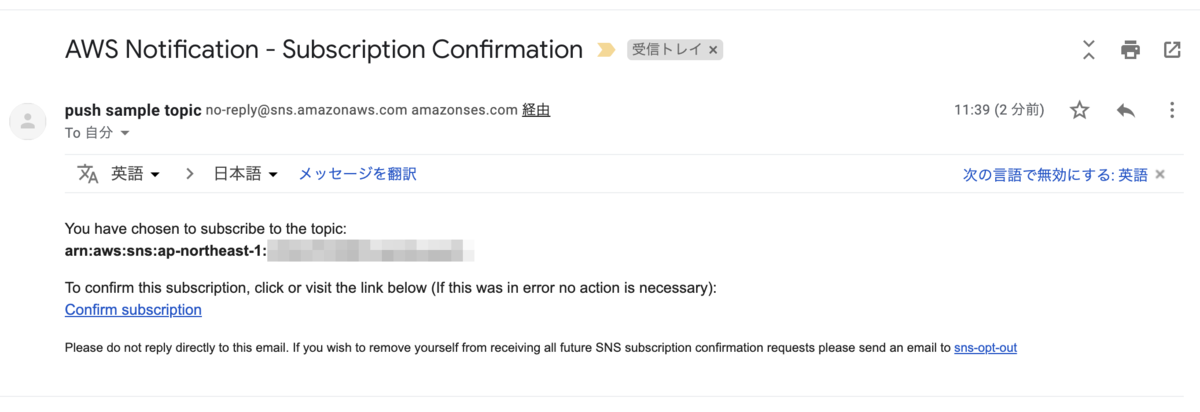
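The post does these steps in the AWS console, but the same topic and email subscription could be created from code. This is a sketch under assumptions: `sns_client` is expected to be a boto3 SNS client (`boto3.client("sns")`), and the function name, topic name and email address are placeholders, not values from the original setup.

```python
def create_topic_with_email(sns_client, topic_name, email_address):
    """Create a topic and subscribe an email endpoint to it.

    Returns the topic ARN. The subscription stays pending until the
    recipient confirms it from the email that SNS sends.
    """
    topic = sns_client.create_topic(Name=topic_name)
    topic_arn = topic["TopicArn"]
    sns_client.subscribe(
        TopicArn=topic_arn,
        Protocol="email",
        Endpoint=email_address,
    )
    return topic_arn
```

With boto3 installed and credentials configured, a call such as `create_topic_with_email(boto3.client("sns"), "my-test-topic", "me@example.com")` should leave the subscription in the pending state described in the next step.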

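Confirming the subscription

In the subscriptions list, the email address from earlier is listed as an endpoint pending confirmation.

An email like the one below arrives at that address, so confirm it.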

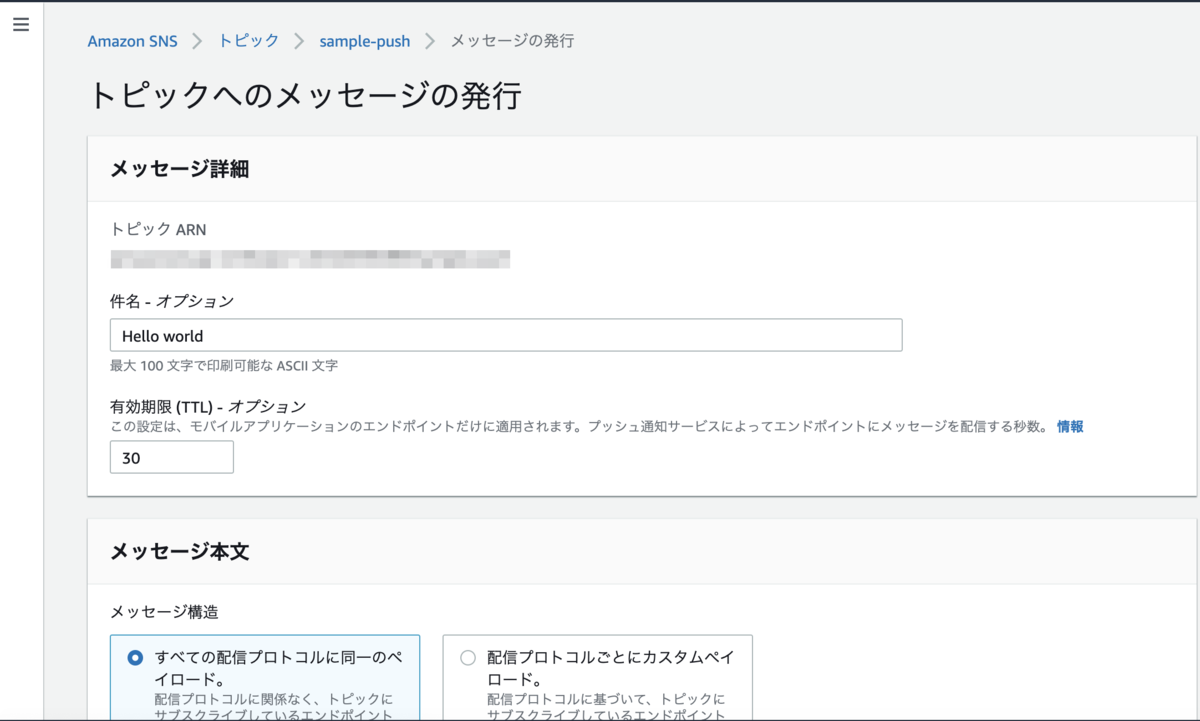

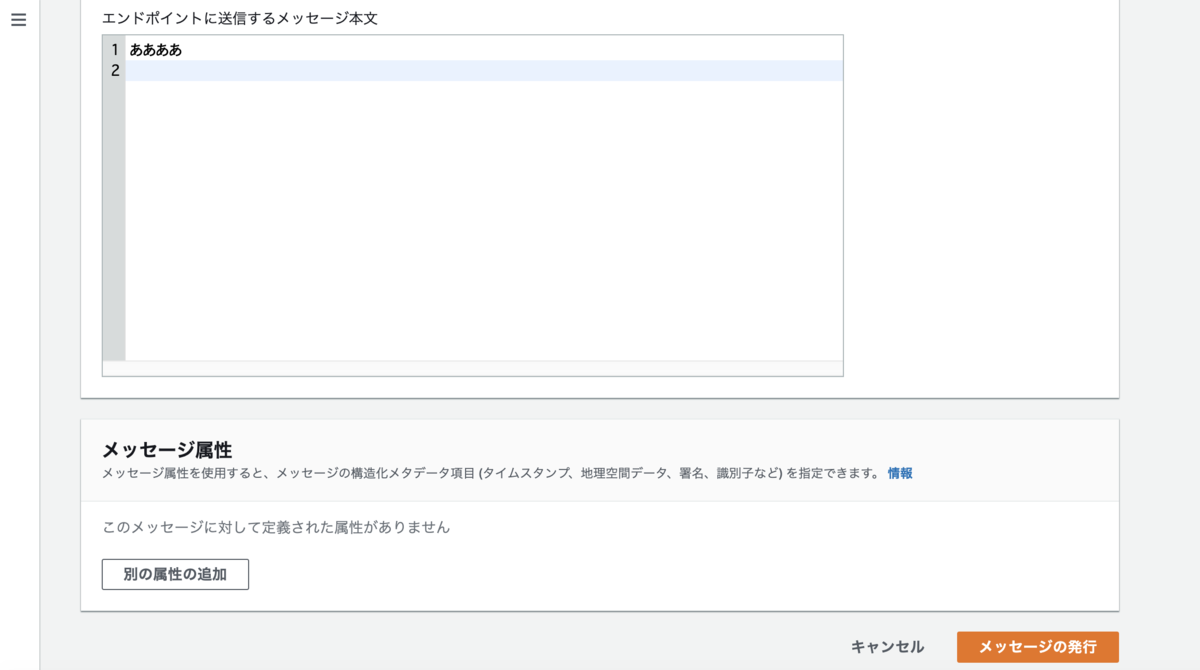

Publishing a message to the topic
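The post stops at this heading, so as a hedged sketch only: once the subscription is confirmed, a message could be published to the topic from code roughly as follows. `sns_client` is assumed to be a boto3 SNS client, `publish_message` is a hypothetical helper name, and the topic ARN has to be the one from your own account.

```python
def publish_message(sns_client, topic_arn, subject, message):
    """Publish one message to the topic and return its MessageId."""
    response = sns_client.publish(
        TopicArn=topic_arn,
        Subject=subject,  # used as the subject line for email endpoints
        Message=message,
    )
    return response["MessageId"]
```

For example, `publish_message(boto3.client("sns"), topic_arn, "Test", "Hello from SNS")` should deliver an email to every confirmed email subscriber of that topic.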

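Studying DynamoDB

I'm trying to build a serverless application with the combination of AWS Lambda + DynamoDB. I had never properly used a NoSQL database such as DynamoDB, so I'm taking this opportunity to study it.

I was recommended this AWS material.

For design topics, the best practices chapter of the manual looks useful. docs.aws.amazon.com

Also, this site has a Japanese translation of a detailed, pattern-by-pattern explanation of data modeling, which I found helpful.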

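Bundling the required external jars into a single jar for AWS Lambda

When building an app on AWS Lambda, there are cases where a required external class cannot be found and java.lang.NoClassDefFoundError is thrown.

The dependencies resolve at build time and there are no compile errors; the cause was that the external libraries were not included when the jar was packaged.

If you use Maven as the build tool, maven-shade-plugin solves this.

The manual also covers this in "Creating a .jar deployment package using Maven without any IDE (Java)".

In the plugins section, the Apache maven-shade-plugin is a plugin that Maven will download and use during your build process. This plugin is used for packaging jars to create a standalone .jar (a .zip file), your deployment package.

On Stack Overflow, the same solution was given for a similar question.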
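One way to check whether the packaged jar really contains a dependency is to look inside it, since a jar is a zip archive. The sketch below assumes the class named in the NoClassDefFoundError message is a top-level class; `jar_contains_class` is a hypothetical helper, and the jar path and class name in the usage note are placeholders.

```python
import zipfile


def jar_contains_class(jar_path, class_name):
    """Return True if the jar holds the .class file for a fully qualified class name."""
    entry = class_name.replace(".", "/") + ".class"
    with zipfile.ZipFile(jar_path) as jar:
        return entry in jar.namelist()
```

For example, `jar_contains_class("target/my-app.jar", "com.example.SomeDependency")` returning False before adding maven-shade-plugin and True afterwards would be consistent with the cause described above.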